In the field of natural language processing and text mining, term frequency and inverse document frequency (TF-IDF) are key concepts that play a crucial role in various language-based applications such as information retrieval and text classification. TF-IDF is a numerical representation of how important a term is to a document or a collection of documents. It is calculated by multiplying the term frequency (how often a term appears in a document) by the inverse document frequency (how common or rare a term is across all documents).

Word embeddings, n-grams, and bag of words are common techniques for representing text in a numerical form suitable for further computation. Word embeddings map words to dense vectors in a semantic space, capturing aspects of their meaning and context. N-grams are adjacent sequences of n words and capture local context and word order. Bag of words ignores word order and records only which words occur in a document and, usually, how often.

TF-IDF weights are typically used within a vector space model, which represents documents as feature vectors and compares them with similarity measures such as cosine similarity. A correlation matrix can additionally be built to measure the similarity between terms based on their co-occurrence in documents. Latent semantic indexing and topic modeling are more advanced methods that can take TF-IDF-weighted data as input to discover hidden semantic structures and extract meaningful topics within a document collection.

Inverse document frequency is a crucial component of TF-IDF, as it penalizes terms that occur frequently across all documents. It helps to identify terms that are more specific and discriminative to a particular document or set of documents. Inverse document frequency is calculated as the logarithm of the total number of documents divided by the document frequency (the number of documents in which a term appears).

Stemming, stop words removal, and tokenization are preprocessing techniques commonly applied before calculating TF-IDF. Stemming reduces words to their base or root form to capture variations of a term. Stop words removal eliminates common and uninformative words from a document, such as “and”, “the”, and “is”. Tokenization breaks text into smaller units, such as words or phrases, for further analysis and processing.
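The three preprocessing steps can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the stop word list is a tiny hypothetical sample, and `naive_stem` is a crude suffix stripper standing in for a real stemming algorithm.

```python
import re

# Hypothetical, deliberately tiny stop word list; real lists are much longer.
STOP_WORDS = {"a", "an", "and", "is", "of", "the", "to"}

def tokenize(text):
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())

def remove_stop_words(tokens):
    """Drop tokens that appear in the stop word list."""
    return [t for t in tokens if t not in STOP_WORDS]

def naive_stem(token):
    """Strip a few common English suffixes (a crude stand-in for a real stemmer)."""
    for suffix in ("ing", "ed", "es", "s"):
        # Keep at least three characters so short words stay intact.
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token

def preprocess(text):
    """Tokenize, remove stop words, then stem what is left."""
    return [naive_stem(t) for t in remove_stop_words(tokenize(text))]

print(preprocess("The cats and the dogs jumped"))  # ['cat', 'dog', 'jump']
```

Tokenization runs first here because stop word removal and stemming both operate on individual tokens.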

Overall, understanding the importance of term frequency and inverse document frequency is essential for effectively processing and analyzing textual data. These concepts serve as the foundation for many language-based applications and methods, enabling us to extract meaningful information, identify patterns, and make informed decisions from large collections of text.

Contents

- 1 What is Term Frequency and Inverse Document Frequency?

- 2 Why are Term Frequency and Inverse Document Frequency important?

- 3 Understanding Term Frequency

- 4 Understanding Inverse Document Frequency

- 5 Importance of Term Frequency and Inverse Document Frequency in Information Retrieval

- 6 FAQ about topic “TF-IDF: Understanding the Importance of Term Frequency and Inverse Document Frequency”

- 7 What is TF-IDF?

- 8 Why is term frequency important in TF-IDF?

- 9 What is inverse document frequency and why is it important?

- 10 How does TF-IDF calculate the importance of a term in a document?

- 11 Can TF-IDF be used in other applications apart from information retrieval and text mining?

What is Term Frequency and Inverse Document Frequency?

In natural language processing and text mining, term frequency and inverse document frequency (TF-IDF) are essential concepts for understanding the importance of words in a collection of documents. A TF-IDF score expresses how relevant a term is to one document relative to the rest of the collection.

Term frequency is a metric that measures the frequency of a term within a document. It is calculated by dividing the number of times a term appears in a document by the total number of words in that document. This metric helps to identify the significance of a term within a particular document.

Inverse document frequency, on the other hand, measures the importance of a term in the entire collection of documents. It is calculated by taking the logarithm of the ratio between the total number of documents and the number of documents that contain the term. This metric helps to identify the uniqueness of a term across the entire collection.

TF-IDF combines term frequency and inverse document frequency to provide a feature vector that represents the importance of each term within a document and across the entire collection. The feature vector can be used in various applications such as text classification, information retrieval, and document clustering.

To calculate TF-IDF, the text is first preprocessed: it is tokenized, stop words are removed, the remaining tokens are stemmed, and n-grams may be formed to capture the context of terms. Then, the term frequency and inverse document frequency are calculated for each term. Finally, the TF-IDF values are computed by multiplying the term frequency by the inverse document frequency.
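The calculation described above can be sketched as follows, assuming the documents have already been preprocessed into lists of tokens. The function name `tf_idf` and the sample corpus are illustrative, and the natural logarithm is an assumption, since the base of the logarithm is not fixed by the definition.

```python
import math
from collections import Counter

def tf_idf(tokenized_docs):
    """Return one {term: weight} dict per document.

    tf  = count of term in document / number of tokens in document
    idf = log(number of documents / number of documents containing term)
    """
    n_docs = len(tokenized_docs)
    # Document frequency: count each term once per document.
    doc_freq = Counter(term for doc in tokenized_docs for term in set(doc))
    weights = []
    for doc in tokenized_docs:
        counts = Counter(doc)
        weights.append({
            term: (count / len(doc)) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return weights

docs = [
    ["cat", "sat", "mat"],
    ["dog", "sat", "log"],
    ["cat", "chased", "dog"],
]
for vector in tf_idf(docs):
    print(vector)
```

In this small corpus, "mat" occurs in only one document and therefore receives a higher weight than "cat" or "sat", which each occur in two.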

The TF-IDF values are often used to build a vector space model where each document is represented as a vector in a high-dimensional space, with one dimension per term. Dimensionality reduction techniques such as singular value decomposition (SVD), which underlies latent semantic indexing (LSI), can be applied to reduce the dimensionality of this representation. Similarity measures can then be used to compare documents based on their TF-IDF vectors and retrieve relevant information.
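Cosine similarity is one common similarity measure for such vectors. The sketch below assumes each document is stored as a sparse `{term: weight}` dictionary; the two sample vectors are hypothetical values chosen for illustration.

```python
import math

def cosine_similarity(vec_a, vec_b):
    """Cosine of the angle between two sparse vectors stored as {term: weight}."""
    dot = sum(weight * vec_b.get(term, 0.0) for term, weight in vec_a.items())
    norm_a = math.sqrt(sum(w * w for w in vec_a.values()))
    norm_b = math.sqrt(sum(w * w for w in vec_b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # convention chosen here for all-zero vectors
    return dot / (norm_a * norm_b)

# Hypothetical TF-IDF vectors for two documents that share the term "cat".
doc_a = {"cat": 0.4, "mat": 0.3}
doc_b = {"cat": 0.4, "dog": 0.3}
print(cosine_similarity(doc_a, doc_b))
```

Because the measure depends only on the angle between the vectors, a long document and a short one with the same term proportions score as identical.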

TF-IDF is a widely used technique in the field of text mining and natural language processing due to its ability to capture the importance of terms in a document collection. It provides a quantitative measure of the relevance of words and enables effective information retrieval and document analysis.

Why are Term Frequency and Inverse Document Frequency important?

Term Frequency (TF) and Inverse Document Frequency (IDF) are important concepts in the field of natural language processing and text mining. They play a crucial role in various tasks, such as document classification, information retrieval, and text analytics.

TF measures the frequency of a term in a given document or text. It helps in representing the document as a bag of words and provides a numerical value indicating the importance of a term within the document. By considering the TF, we can focus on the most relevant terms and dismiss the less important ones.

On the other hand, IDF measures the rarity or uniqueness of a term in a collection of documents. It indicates how informative a term is across the entire corpus and helps in distinguishing between common and uncommon terms. IDF is calculated by taking the logarithm of the total number of documents divided by the document frequency, which is the number of documents that contain a particular term.

The combination of TF and IDF is used to calculate the Term Frequency-Inverse Document Frequency (TF-IDF) weight, which is a numerical representation of the importance of a term in a document relative to the collection. In a vector space model, TF-IDF vectors can also serve as the input to dimensionality reduction methods that capture semantic similarities between terms.

TF-IDF works alongside various text mining techniques. Tokenization, stemming, and n-gram extraction prepare the terms that TF-IDF weighs, while latent semantic indexing and topic modeling can build on the resulting weights. Word embeddings offer a complementary, dense representation. Together, these techniques help extract meaningful information from text data.

Additionally, TF-IDF helps in handling common words, known as stop words, which are often ignored in text analysis due to their high frequency but low informativeness. By considering the IDF, stop words can be assigned lower weights and therefore have less impact on the analysis.

In conclusion, Term Frequency and Inverse Document Frequency are important in text mining and natural language processing because they provide a quantitative representation of term importance, supply the input for dimensionality reduction, and support various techniques for analyzing text data. By considering TF and IDF, we can extract meaningful information and uncover hidden patterns and relationships in textual data.

Understanding Term Frequency

In the context of text mining and natural language processing, term frequency refers to the number of times a particular term or word appears in a given document or text.

Term frequency is a crucial metric in various text analysis techniques and algorithms, such as the vector space model and document similarity measures. It helps to capture the importance or relevance of a term within a document or corpus.

When analyzing large datasets, it is essential to preprocess the text by performing tasks like tokenization, stemming, and removing stop words. Tokenization breaks the text into individual words or tokens, stemming reduces words to their root forms, and stop words are common words like “the” and “and” that have little semantic value.

Once the text has been preprocessed, term frequency can be calculated for each word. This information can then be used to construct a feature vector, which represents the frequency of each term in the document.

In addition to term frequency, another important factor in text analysis is inverse document frequency. Inverse document frequency measures how rare a term is across the documents in a corpus: the fewer documents contain the term, the higher its value. It helps to identify terms that are more unique or specific to a particular document.

Term frequency and inverse document frequency can be combined to create a weighted term frequency-inverse document frequency (tf-idf) score. This score provides a measure of the importance of a term within a document or corpus, taking into account both its frequency within a document and its rarity across the corpus.

In practice, term frequency and inverse document frequency are often used in conjunction with dimensionality reduction techniques such as singular value decomposition, and with topic modeling, to analyze and extract insights from large text datasets. These techniques help to create a semantic space where words and documents are represented as vectors with far fewer dimensions than the original vocabulary.

N-gram models and word embeddings are also commonly used in text analysis to capture more contextual information. N-grams represent sequences of N consecutive words, while word embeddings map words to dense vector representations that encode semantic relationships.

Overall, understanding term frequency is essential in text analysis as it forms the basis for many advanced algorithms and techniques. By accurately representing the importance of terms within a document or corpus, valuable insights can be extracted from text data.

Definition of Term Frequency

Term frequency is a crucial concept in the field of natural language processing and text mining. It refers to the frequency or occurrence of a specific term or word in a given document or text corpus. Term frequency is often used as a basic measure to determine the importance or relevance of a term within a document or a collection of documents.

Tokenization is a preprocessing technique used to break down a piece of text into smaller units called tokens. These tokens can be words, phrases, or even individual characters. Stemming is another preprocessing technique that aims to reduce words to their base or root form. Both tokenization and stemming play a vital role in calculating term frequency.

In the vector space model, a document is represented as a feature vector in which each dimension corresponds to a unique term and holds that term's frequency. Because the resulting matrices are large and sparse, dimensionality reduction methods such as singular value decomposition (SVD), as used in latent semantic indexing (LSI), are often applied.

When weighting terms, it is also important to consider the document frequency, which refers to the number of documents in which a term appears. Document frequency helps in filtering out uninformative terms, for example by down-weighting or discarding terms that occur in almost every document, and it complements stop words removal.

Term frequency plays a crucial role in various applications, including topic modeling, where it helps in identifying the main themes or topics within a collection of documents. Word counts also matter for word embedding techniques such as word2vec or GloVe, which represent words as vectors in a semantic space based on their usage patterns; GloVe, for example, is fitted to word co-occurrence counts.

In summary, term frequency is a fundamental concept in text mining and natural language processing. It is computed after preprocessing steps such as tokenization and stemming, it is the basis for representing text as feature vectors, and it plays a crucial role in applications like topic modeling.

How is Term Frequency calculated?

The term frequency (TF) is a measure of how frequently a term appears in a document. It is an important concept in the vector space model, which represents each document in a collection as a vector with one dimension per term. TF is calculated by counting the number of times a term occurs in a document and dividing it by the total number of terms in the document.
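As a minimal sketch of this calculation, the function below takes an already tokenized document and returns the relative frequency of each term. The function name and the sample tokens are illustrative.

```python
from collections import Counter

def term_frequency(tokens):
    """Relative frequency of each term: occurrences / total tokens."""
    counts = Counter(tokens)
    total = len(tokens)
    return {term: count / total for term, count in counts.items()}

tokens = ["data", "mining", "finds", "patterns", "in", "data"]
tf = term_frequency(tokens)
print(tf["data"])    # 2 occurrences out of 6 tokens
print(tf["mining"])  # 1 occurrence out of 6 tokens
```

Dividing by the document length keeps long documents from receiving higher scores simply because they contain more words.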

Several techniques can reduce the size of the resulting representation. Stemming reduces words to their root form, which merges variants of a word into a single term and shrinks the vocabulary. Topic modeling identifies the main themes in a document collection, and singular value decomposition (SVD) reduces the dimensionality of the vector space representation.

In addition, the weight given to a term can take its document frequency (DF) into account. DF is the number of documents in which a term appears. TF-IDF (Term Frequency-Inverse Document Frequency) is a commonly used weighting scheme that takes into account both the TF and the DF to measure the importance of a term.

The TF calculation is an important step in various natural language processing (NLP) and text mining tasks, and it relies on tokenization. Text mining involves extracting useful information from a large amount of text, and tokenization refers to breaking down a text into individual words or tokens. Word embedding, a technique for representing words as vectors in a semantic space, is a related way of turning text into numbers.

Before calculating the TF, stop words are often removed from the text. Stop words are common words that do not carry much meaning, such as “and,” “the,” and “is.” Removing them keeps very frequent but uninformative words from dominating the term counts.

The TF can also be calculated using n-grams, which are contiguous sequences of n words. By considering n-grams instead of individual words, the TF calculation can capture more contextual information and improve the accuracy of the similarity measure between documents.
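A short sketch of n-gram extraction follows, assuming a document that is already tokenized. The helper name `ngrams` and the sample tokens are illustrative; counting the resulting tuples gives n-gram frequencies in the same way that counting single tokens gives word frequencies.

```python
from collections import Counter

def ngrams(tokens, n):
    """Return all contiguous sequences of n tokens as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

tokens = ["new", "york", "city", "new", "york"]
bigram_counts = Counter(ngrams(tokens, 2))
print(bigram_counts[("new", "york")])  # the bigram occurs twice
```

Treating ("new", "york") as a single term preserves a phrase that separate counts for "new" and "york" would lose.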

In conclusion, the term frequency is a crucial factor in understanding the importance of a term in a document. By calculating the TF, we can measure the frequency of a term within a document and use it for various NLP tasks, such as similarity analysis, topic modeling, and information retrieval.

Understanding Inverse Document Frequency

Inverse Document Frequency (IDF) is an important concept in Natural Language Processing (NLP) and plays a crucial role in text mining and information retrieval. IDF is used to calculate the weight of a term in a collection of documents.

In simple terms, IDF measures how important a term is within a corpus. It helps in identifying the rare and important terms that can be used as key features in various NLP tasks.

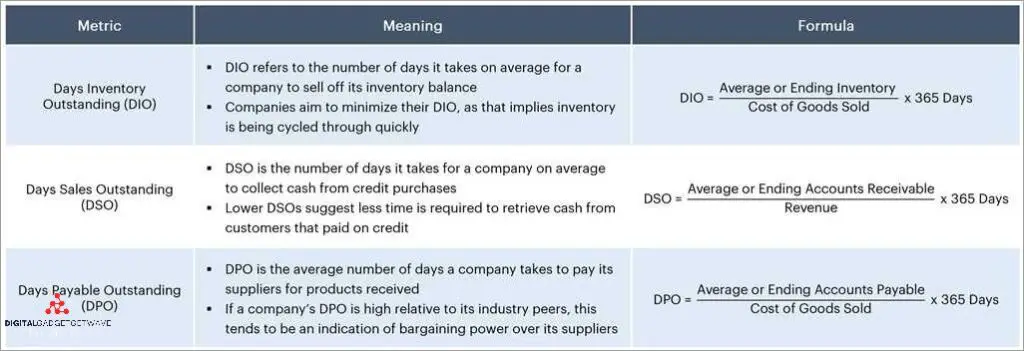

To calculate IDF, we need to consider two important factors: document frequency and total number of documents. Document frequency refers to the number of documents in which a term appears. Total number of documents is the total count of documents in the corpus.

The IDF value decreases as the document frequency of a term increases. If a term appears in many documents, its IDF value will be low, indicating that it is not very important or distinctive. On the other hand, if a term appears in only a few documents, its IDF value will be high, indicating that it is rare and important.

The formula to calculate IDF is:

IDF = log(total number of documents / document frequency)
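The formula can be applied to a whole corpus with a few lines of Python. This is a sketch under two assumptions: the documents are already tokenized, and the natural logarithm is used. The function name and sample corpus are illustrative.

```python
import math

def inverse_document_frequency(tokenized_docs):
    """IDF = log(N / DF) for every term that occurs in the corpus."""
    n_docs = len(tokenized_docs)
    doc_freq = {}
    for doc in tokenized_docs:
        for term in set(doc):  # count each document at most once per term
            doc_freq[term] = doc_freq.get(term, 0) + 1
    return {term: math.log(n_docs / df) for term, df in doc_freq.items()}

docs = [
    ["the", "cat", "sat"],
    ["the", "dog", "barked"],
    ["the", "cat", "purred"],
]
idf = inverse_document_frequency(docs)
print(idf["the"])  # in all 3 documents: log(3 / 3) = 0.0
print(idf["cat"])  # in 2 documents: log(3 / 2)
print(idf["dog"])  # in 1 document: log(3 / 1)
```

A term that occurs in every document gets an IDF of exactly zero under this formula, which is why some implementations add a smoothing constant.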

Once we have the IDF values for all the terms in a corpus, we can use them to calculate the weight of each term in a document using the Term Frequency-Inverse Document Frequency (TF-IDF) measure.

IDF is an essential component in various NLP techniques and applications such as text classification, document clustering, and information retrieval. It reduces the influence of uninformative dimensions of the feature vector and can improve the accuracy of similarity measures.

By considering IDF, we can overcome certain limitations of term frequency (TF) alone, such as giving too much weight to common terms (stop words) and ignoring rare but important terms. IDF helps in capturing the semantic significance and distinguishing power of terms, making it a valuable tool in text mining and NLP tasks.

Definition of Inverse Document Frequency

Inverse Document Frequency (IDF) is a key concept in text mining and natural language processing. It is used to measure the importance of a term in a document collection or corpus. IDF is often combined with term frequency (TF) to determine the importance of a term within a specific document.

To understand IDF, it’s important to first understand term frequency. Term frequency refers to the number of times a term appears in a document. It is used to create a feature vector, which represents the document in a vector space model. This vector is then used for various text mining tasks, such as similarity measure, clustering, and topic modeling.

On the other hand, IDF measures the rarity of a term across a collection of documents. It provides a weight to each term based on its occurrence across the corpus. The intuition behind IDF is that terms that appear in many documents are less informative, while terms that appear in fewer documents are more informative.

The IDF value for each term is typically calculated using the formula: IDF = log (N / DF), where N is the total number of documents in the corpus and DF is the document frequency of the term, i.e., the number of documents in which the term appears.

By combining term frequency and IDF, we can obtain a more nuanced representation of a document or text, which takes into account both the frequency and rarity of terms. This can improve the performance of various text mining tasks. IDF is often used in conjunction with other techniques, such as stemming, stop words removal, dimensionality reduction (e.g., singular value decomposition or latent semantic indexing), and word embedding.

In summary, IDF is a measure of the importance of a term across a collection of documents. It helps to capture the rareness of a term and is widely used in text mining and natural language processing to improve the representation and analysis of text data.

How is Inverse Document Frequency calculated?

The Inverse Document Frequency (IDF) is an important metric in natural language processing (NLP) and information retrieval. It is used to determine the relevance and importance of terms in a collection of documents. IDF is calculated from the occurrence of a term in the corpus, specifically by taking the logarithm of the ratio of the total number of documents to the number of documents containing the term.

To calculate IDF, we first need to tokenize the documents, which involves splitting the text into individual words or terms. The tokens can be single words, as in a bag-of-words model, or n-grams. Once the documents are tokenized, we can count the terms in each document and represent the documents in a vector space model.

Next, we analyze the term frequency and document frequency. Term frequency (TF) refers to the number of times a term appears in a document, while document frequency (DF) refers to the number of documents containing a specific term. DF, together with the total number of documents, is used to calculate the IDF value for each term in the corpus; TF is needed in the following step.

After calculating the IDF for each term, we can generate a feature vector for each document by multiplying the IDF values with the corresponding TF values. This feature vector represents the importance of each term in the document based on its frequency and occurrence in the corpus.
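The multiplication step can be sketched as follows. The TF and IDF values are hypothetical numbers chosen for illustration, and a fixed, sorted vocabulary is assumed so that the same position refers to the same term in every document's vector.

```python
def feature_vector(tf, idf, vocabulary):
    """Dense TF-IDF vector with one position per vocabulary term."""
    return [tf.get(term, 0.0) * idf.get(term, 0.0) for term in vocabulary]

# Hypothetical TF values for one document and IDF values for the corpus.
tf = {"cat": 0.5, "sat": 0.5}
idf = {"cat": 0.4, "dog": 1.1, "sat": 0.0}
vocabulary = sorted(idf)  # fixed term order shared by every document

print(vocabulary)                           # ['cat', 'dog', 'sat']
print(feature_vector(tf, idf, vocabulary))  # [0.2, 0.0, 0.0]
```

"dog" scores zero because it does not occur in the document, while "sat" scores zero because its IDF is zero, meaning it occurs in every document of the corpus.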

It’s important to note that stop words, which are commonly used words like “and” or “the,” are often excluded from the calculation of IDF as they do not provide much meaningful information. Additionally, techniques like stemming or lemmatization can be applied to further reduce the dimensionality of the feature vectors.

The IDF calculation is a crucial step in various NLP tasks such as text mining, topic modeling, and information retrieval. It helps in identifying key terms or features that are unique and relevant to specific documents, improving the accuracy of similarity measures and document ranking algorithms. Techniques like singular value decomposition (SVD) can also be used on the IDF-weighted feature vectors to further enhance the representation of documents in a lower-dimensional space.

Importance of Term Frequency and Inverse Document Frequency in Information Retrieval

Term Frequency (TF) and Inverse Document Frequency (IDF) are important concepts in information retrieval, which is the process of finding relevant information from a collection of documents. TF measures the frequency of a term in a document, while IDF measures the importance of a term in a collection of documents.

In information retrieval, stopwords are common words that are often excluded from the analysis, as they don’t provide much meaningful information. TF and IDF weight the remaining terms by how much they say about a document; capturing the semantic meaning of words is the job of other techniques, such as word embedding.

Document frequency refers to the number of documents in a collection that contain a specific term. It is used to calculate IDF, which is the logarithm of the total number of documents divided by the document frequency. IDF helps to give more weight to rare terms and less weight to common terms.

N-grams and latent semantic indexing change the set of features in a dataset in opposite directions. N-grams group consecutive words together, which adds features that capture phrases, while latent semantic indexing reduces the number of features: it uses a mathematical technique called singular value decomposition to identify the underlying semantic structure of a document collection.

Natural Language Processing (NLP) techniques, including tokenization and stemming, are often applied to preprocess the text before calculating TF and IDF. Tokenization involves splitting a document into individual words or tokens, while stemming refers to reducing words to their base or root form.

A feature vector is a numerical representation of a document, which is generated by combining TF and IDF values. These feature vectors are used in various models, such as the vector space model and semantic space model, to calculate the similarity between documents.

A correlation matrix is a useful tool in information retrieval, as it measures the correlation between different terms in a document collection. Similarity measures, such as cosine similarity, are used to quantify the similarity between two documents based on their feature vectors.

Bag of words and topic modeling are techniques that build on term counts to represent documents. Bag of words represents a document as a collection of words, ignoring their order, while topic modeling identifies the underlying topics in a document collection using probabilistic models.

In conclusion, term frequency and inverse document frequency play a vital role in information retrieval. These concepts help in identifying the terms that characterize a document, in down-weighting uninformative terms, and in representing documents in a numerical form. By incorporating TF and IDF, we can improve the accuracy and relevance of information retrieval systems.

How do Term Frequency and Inverse Document Frequency affect search results?

In the field of text mining and natural language processing, term frequency (TF) and inverse document frequency (IDF) play a crucial role in determining the relevance of search results. TF measures how frequently a term appears in a document, while IDF measures the significance of a term across multiple documents.

The TF-IDF score is calculated by multiplying the term frequency and inverse document frequency. This score helps prioritize the importance of a term in a document and in the overall corpus of documents. By incorporating TF-IDF, search engines can provide more accurate and relevant search results to users.

Stop words are commonly used words, such as “a”, “the”, and “and”, that are often excluded from analysis due to their high frequency in most documents. By removing these stop words, the TF-IDF calculation can focus on more meaningful and unique terms that better capture the essence of a document.

Another important concept in text mining is the feature vector, which represents a document as a mathematical vector based on its TF-IDF values. This allows for efficient comparison and ranking of documents based on their similarity to a given query.
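One simple way to rank documents against a query is to add up the TF-IDF weights of the query terms in each document. The sketch below uses that scoring rule as an assumption; it is a simplified stand-in for comparing a query vector with each document vector, and the document vectors are hypothetical values.

```python
def rank_documents(query_terms, doc_vectors):
    """Rank document indices by the summed TF-IDF weight of the query terms."""
    scores = [
        sum(vector.get(term, 0.0) for term in query_terms)
        for vector in doc_vectors
    ]
    return sorted(range(len(doc_vectors)), key=lambda i: scores[i], reverse=True)

# Hypothetical precomputed TF-IDF vectors for three documents.
doc_vectors = [
    {"cat": 0.20, "mat": 0.37},
    {"dog": 0.14, "log": 0.37},
    {"cat": 0.14, "dog": 0.14, "chased": 0.37},
]
print(rank_documents(["cat", "dog"], doc_vectors))  # [2, 0, 1]
```

The third document ranks first because it is the only one that contains both query terms.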

N-gram analysis is a technique that considers sequences of n words in a document, providing a more comprehensive understanding of the text structure. This can be helpful in capturing phrases or idiomatic expressions that may not be captured by single words alone.

Latent Semantic Indexing (LSI) is a technique that uses a singular value decomposition (SVD) of the TF-IDF matrix to capture the underlying semantic relationships between terms and documents. This helps in discovering hidden concepts and improving the retrieval accuracy.
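The decomposition behind LSI can be written compactly. The notation here is an assumption made for illustration: X is the term-document matrix of TF-IDF weights with m terms and n documents, and k is the number of latent dimensions to keep, chosen much smaller than m and n.

```latex
X \approx X_k = U_k \, \Sigma_k \, V_k^{\top},
\qquad
U_k \in \mathbb{R}^{m \times k},\quad
\Sigma_k \in \mathbb{R}^{k \times k},\quad
V_k \in \mathbb{R}^{n \times k}
```

Here \Sigma_k is a diagonal matrix holding the k largest singular values of X, the rows of U_k place terms in the reduced space, and the rows of V_k place documents in it. Documents can then be compared in k dimensions instead of m.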

Tokenization is the process of breaking down a text into individual tokens or words. It is a crucial step in many text analysis tasks, including calculating TF and IDF values. Stemming is another technique that reduces words to their base or root form, helping to group similar terms together and improve search results.

The bag of words model is a simplified representation of a document that considers the frequency of each term without considering the order or structure of the words. While it may lose some context, it allows for efficient computation in large-scale text analysis.

Dimensionality reduction techniques, such as using a correlation matrix or applying singular value decomposition, help reduce the complexity of a high-dimensional semantic space by capturing the most important and informative features. This improves efficiency and effectiveness in search results.

In conclusion, TF and IDF are fundamental concepts in text mining and play a vital role in determining search result relevance. By considering term frequency, inverse document frequency, and various text analysis techniques, search engines can provide more accurate and meaningful search results to users.

How can Term Frequency and Inverse Document Frequency be used to improve search engine rankings?

Term Frequency and Inverse Document Frequency (TF-IDF) is a powerful technique used in information retrieval and search engine ranking algorithms. By taking into account the frequency of terms in documents and their relevance across a collection of documents, TF-IDF can greatly improve the accuracy and relevance of search engine rankings.

Firstly, TF-IDF relies on tokenization, which breaks down text into smaller units (tokens), such as words or phrases. This allows the analysis to focus on the individual elements of the document. Additionally, TF-IDF is usually combined with preprocessing techniques from text mining and natural language processing, such as stop words removal and stemming. These further enhance the accuracy and efficiency of the search engine ranking algorithm.

TF-IDF works by calculating the term frequency (TF) and inverse document frequency (IDF) for each term in a collection of documents. The term frequency measures how often a term appears in a document, while the inverse document frequency measures how rare, and therefore how informative, a term is within the entire collection of documents. A similarity matrix built from the TF-IDF vectors, for example with cosine similarity, provides a similarity measure between documents, which is crucial for search engine rankings.

One technique that can utilize TF-IDF is latent semantic indexing (LSI), which is related to topic modeling. LSI uncovers hidden relationships between words and documents by representing the documents and terms in a lower-dimensional semantic space. This allows for more accurate and contextually relevant search results. Another application is the creation of feature vectors, which represent documents using TF-IDF scores, enabling faster and more precise retrieval of relevant documents.

Additionally, by incorporating inverse document frequency, TF-IDF addresses the issue of term commonness. Common terms, such as “and” or “the,” have high term frequency but low inverse document frequency. TF-IDF gives them lower weights, allowing more weight to be given to less common terms that are more indicative of the document’s topic or content. This helps to improve the precision and accuracy of search engine rankings.

To further enhance the efficiency and effectiveness of TF-IDF, dimensionality reduction techniques like singular value decomposition (SVD) can be applied. SVD reduces the dimensionality of the TF-IDF matrix, improving computation speed and eliminating noise. N-gram features can further refine the relevance ranking by capturing relationships between adjacent terms.

In conclusion, Term Frequency and Inverse Document Frequency play a vital role in improving search engine rankings. By accurately measuring the frequency and importance of terms in documents, TF-IDF enables more relevant results, enhances the understanding of document relationships, and improves the overall quality of search engine rankings.

FAQ about topic “TF-IDF: Understanding the Importance of Term Frequency and Inverse Document Frequency”

What is TF-IDF?

TF-IDF stands for term frequency-inverse document frequency. It is a technique used in information retrieval and text mining to measure the importance of a term within a document or a corpus of documents. The term frequency (tf) measures the number of times a term appears in a document, while the inverse document frequency (idf) measures the rarity of the term within the corpus.

Why is term frequency important in TF-IDF?

Term frequency is important in TF-IDF because it reflects how often a term appears in a document. A higher term frequency indicates that a term is more important and relevant to the document. This helps in ranking documents based on their relevance to a given query.

What is inverse document frequency and why is it important?

Inverse document frequency (idf) measures the rarity of a term within a corpus of documents. It is important because it helps in determining the importance of a term in the entire corpus. Terms that appear in only a few documents are considered more important and receive a higher idf weight. This helps in distinguishing between common terms and rare terms.

How does TF-IDF calculate the importance of a term in a document?

TF-IDF calculates the importance of a term in a document by multiplying its term frequency (tf) by its inverse document frequency (idf). This product gives a weight to the term that represents its importance. The higher the weight, the more important the term is in the document.
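A worked example makes the multiplication concrete. The numbers are hypothetical, the function name is illustrative, and the natural logarithm is an assumption, since the base of the logarithm is not specified here.

```python
import math

def tf_idf_weight(term_count, doc_length, n_docs, doc_freq):
    """TF-IDF of one term from raw counts, using the natural logarithm."""
    tf = term_count / doc_length
    idf = math.log(n_docs / doc_freq)
    return tf * idf

# Hypothetical numbers: a term occurring 3 times in a 100-word document,
# found in 10 documents of a 1,000-document corpus.
print(round(tf_idf_weight(3, 100, 1000, 10), 3))  # 0.03 * ln(100), prints 0.138
```

If the same term appeared in all 1,000 documents, the idf factor would be log(1) = 0 and the weight would drop to zero regardless of how often the term occurs in the document.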

Can TF-IDF be used in other applications apart from information retrieval and text mining?

Yes, TF-IDF can be used in other applications apart from information retrieval and text mining. It is commonly used in natural language processing, document classification, document clustering, and text summarization. The technique is versatile and can be applied to various tasks that involve analyzing textual data.