When it comes to measuring time intervals, understanding the difference between microseconds and milliseconds is crucial. These units are used in fields such as computer programming, physics, and telecommunications, where accurate timing and measurement are essential.

The main difference between a microsecond and a millisecond is the scale of time they represent. A microsecond is one millionth of a second (10^-6 s), while a millisecond is one thousandth of a second (10^-3 s), so one millisecond equals 1,000 microseconds. The microsecond is therefore the smaller unit and suits shorter intervals, while the millisecond suits longer durations.
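As a quick check on these definitions, the following minimal Python sketch encodes the factor of 1,000 between the two units. The constants and the helpers `ms_to_us` and `us_to_ms` are illustrative names chosen here, not part of any library.

```python
# Exact conversion factors between seconds, milliseconds and microseconds.
US_PER_SECOND = 1_000_000   # microseconds in one second
MS_PER_SECOND = 1_000       # milliseconds in one second
US_PER_MS = US_PER_SECOND // MS_PER_SECOND  # 1,000 microseconds in one millisecond


def ms_to_us(milliseconds: float) -> float:
    """Convert a duration in milliseconds to microseconds (hypothetical helper)."""
    return milliseconds * US_PER_MS


def us_to_ms(microseconds: float) -> float:
    """Convert a duration in microseconds to milliseconds (hypothetical helper)."""
    return microseconds / US_PER_MS


if __name__ == "__main__":
    print(ms_to_us(1))      # 1000
    print(us_to_ms(1))      # 0.001
    print(us_to_ms(2_500))  # 2.5
```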

So when should you use each unit? It depends on the context. If you need to measure or benchmark a process that happens very quickly, such as a short computation or an electronic signal, microsecond timing is more appropriate. Expressing results in microseconds gives finer resolution, which supports precise analysis and optimization, provided the clock you measure with can actually resolve microseconds.

On the other hand, if you are dealing with events that unfold more slowly, such as human reaction times or latency across wide-area networks, millisecond timing is more suitable. Millisecond measurements still offer good resolution and capture most human interactions and communication delays, which typically fall within this range.

Overall, the choice between microsecond and millisecond timing depends on the requirements of your application. Considering the scale and precision you need will help you obtain accurate, relevant measurements, whether you are optimizing performance or analyzing human interactions.

What is a Microsecond?

A microsecond is a unit of time one thousand times smaller than a millisecond: one millionth of a second, or 0.000001 seconds. Microseconds are commonly used in computer science and electronic engineering to express very short intervals with high precision.

For timing and delay, the microsecond is the scale at which the speed and performance of many devices and systems are measured. It provides the resolution that tasks requiring precise timing, such as benchmarking, comparisons, and latency measurements, often need.

The use of microseconds as a unit of time allows for the measurement of shorter durations and faster processes than would be feasible with larger units like milliseconds. This is particularly important in fields such as telecommunications, robotics, and high-frequency trading, where quick response times and precise timing are crucial for optimal performance.

Because it is so small, a microsecond can be hard to visualize. To put it into perspective, one second equals 1,000 milliseconds or 1,000,000 microseconds, and a single millisecond contains 1,000 microseconds. Even light in a vacuum travels only about 300 meters in one microsecond.

Overall, the microsecond is an important unit wherever timing and speed are critical, because it lets you measure and control processes that run at very fast rates.

Definition

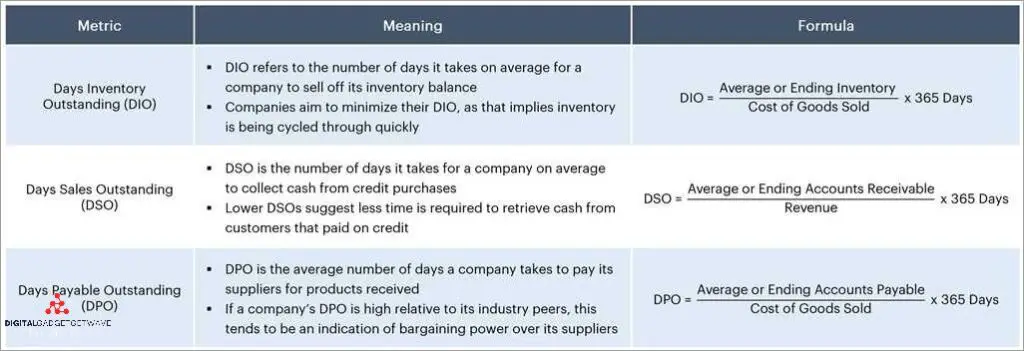

Difference: The difference between microseconds and milliseconds lies in the scale of time they measure. A microsecond is one millionth of a second, while a millisecond is one thousandth of a second.

Accuracy and Precision: Because a microsecond is 1,000 times smaller, a value expressed in microseconds can carry finer resolution than one rounded to milliseconds. Whether that value is also more accurate depends on the clock that produced it.

Timing and Measurement: Microseconds are often used in high-performance systems and critical applications that require precise timing measurements. Milliseconds are commonly used in everyday applications where slightly lower accuracy and precision are acceptable.

Benchmark and Performance: The choice between microseconds and milliseconds depends on the benchmark and performance requirements. Microsecond-level timing is crucial in industries such as finance, telecommunications, and scientific research, where even small delays can drastically impact performance.

Latency and Delay: When it comes to measuring latency or delay, microseconds offer a finer resolution compared to milliseconds. For example, in network communication, measuring the delay in microseconds can provide more detailed insights into the performance of the network.

Speed and Duration: Microseconds are used to measure very short durations, such as the response time of electronic components or the time a short routine takes to run. A single processor instruction is faster still, typically taking a nanosecond or less on modern CPUs. Milliseconds, on the other hand, are often used to measure longer durations, such as the time it takes to perform a task or receive a response from a remote server.

Comparison and Interval: When comparing the performance of two systems or actions, microsecond measurements can reveal differences that millisecond measurements would round away, allowing a more accurate assessment of the speed or efficiency being evaluated.
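The following sketch illustrates how millisecond reporting can hide a real difference. The two durations are hypothetical values stored in microseconds, and `truncate_to_ms` is an illustrative helper that reports whole milliseconds only.

```python
def truncate_to_ms(duration_us: int) -> int:
    """Report a duration in whole milliseconds, discarding the sub-millisecond part."""
    return duration_us // 1_000


# Two hypothetical measured durations, stored in microseconds.
system_a_us = 1_200
system_b_us = 1_450

# At millisecond resolution both systems look identical...
print(truncate_to_ms(system_a_us), truncate_to_ms(system_b_us))  # 1 1
# ...while microsecond resolution shows a 250-microsecond gap.
print(system_b_us - system_a_us)  # 250
```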

Summary: The difference between microseconds and milliseconds lies in their scale, resolution, and usage. Microseconds offer finer resolution, making them suitable for critical, high-performance systems that require precise timing measurements. Milliseconds are commonly used in everyday applications where slightly lower precision is acceptable. The choice between them depends on the specific requirements and benchmarks of the application at hand.

Usage in Technology

The difference between microseconds and milliseconds is crucial in the field of technology, where accuracy and precision are paramount. Microseconds are often used for benchmarking and performance measurements, especially in high-speed systems where even the slightest delay can have a significant impact.

Microseconds are the preferred unit when timing intervals that require high resolution and precision. For example, when measuring the latency of a network connection or the duration of a short function call, using microseconds allows for a more accurate comparison and analysis.
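As a minimal sketch of such a measurement, the code below times one call with Python's `time.perf_counter_ns()` and reports the result in both units. The `work` function is a hypothetical stand-in for whatever operation you are benchmarking; a real benchmark would repeat the measurement (for example with the `timeit` module) rather than rely on a single call.

```python
import time


def work() -> int:
    """Hypothetical workload standing in for the operation being measured."""
    return sum(i * i for i in range(10_000))


def time_call_ns(func) -> int:
    """Return the elapsed time of a single call to func, in nanoseconds."""
    start = time.perf_counter_ns()
    func()
    return time.perf_counter_ns() - start


elapsed_ns = time_call_ns(work)
elapsed_us = elapsed_ns / 1_000      # nanoseconds -> microseconds
elapsed_ms = elapsed_ns / 1_000_000  # nanoseconds -> milliseconds

# The same measurement, reported at two resolutions.
print(f"{elapsed_us:.1f} us")
print(f"{elapsed_ms:.3f} ms")
```

Storing the raw nanosecond integer and converting only for display keeps full resolution available if you later need it.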

On the other hand, milliseconds are commonly used for measuring larger intervals of time in technology. They are often used for measuring the response time of a user interface or the processing time of complex algorithms. Milliseconds provide a larger scale for measuring time and are more suitable for measuring events that occur at a slower pace.

When it comes to performance optimization, the speed and efficiency of software and hardware systems are often reported in milliseconds. By reducing processing time measured in milliseconds, developers can make systems faster and more efficient.

In summary, the choice between microseconds and milliseconds depends on the specific use case and the level of accuracy required. Microseconds are typically used for measuring short intervals with high precision, while milliseconds are used for measuring larger intervals and for performance optimization at a coarser level. Understanding the difference between these two units of time is essential for ensuring accurate timing and achieving optimal performance in technology-driven systems.

What is a Millisecond?

A millisecond is a unit of time equal to one thousandth of a second (0.001 seconds), or 1,000 microseconds. It is commonly used to describe the timing, speed, and duration of various processes or actions.

Compared with other units of time, a millisecond is longer than a microsecond but shorter than a second. It provides a level of precision that is suitable for many everyday measurements and applications.

Millisecond timing is often used in various fields, including computer science, electronics, telecommunications, and physics. It is particularly important in situations where small delays or differences in timing can significantly impact the overall performance or functionality of a system.

With millisecond measurements, it becomes possible to understand and analyze the latency or delay between different events or actions. This can help identify bottlenecks, optimize performance, and improve the overall speed and efficiency of a system.

To keep measurements comparable, benchmark results are often reported in milliseconds as a common unit. A shared unit gives a consistent basis for comparing different systems and assessing their performance fairly.

The scale of the millisecond suits a wide range of applications. Whether it's the response time of a website, the time to complete a file operation, or the duration of an animation frame, the millisecond provides the necessary level of detail.

Definition

Timing refers to the measurement or indication of the time taken for a process or event to occur.

A microsecond is a unit of time equal to one millionth (10^-6) of a second. It is commonly used to measure very small intervals of time.

An interval is a specific length of time defined by a starting point and an ending point.

A millisecond is a unit of time equal to one thousandth (10^-3) of a second, or 1,000 microseconds. It is commonly used to measure durations longer than those typically expressed in microseconds.

Benchmark is a standard or point of reference for measuring and comparing the performance of a system or process.

Resolution is the smallest time difference that a particular timing system can distinguish.

Duration refers to the length of time that an event or process takes to complete.

Speed refers to the rate at which something moves or occurs over a given period of time.

Performance relates to how well a system or process is functioning or executing tasks within a specific timeframe.

Time is a continuous and irreversible progression of events occurring in the past, present, and future.

Accuracy measures the conformity of a measurement or result to the true or expected value.

Faster indicates a higher speed or rate of doing something in comparison to something else.

Latency refers to the delay or time lag between the initiation and execution of a command or process.

Precision is the level of detail or exactness with which a measurement or result is represented.

Delay refers to the period of time between a stimulus and its response or between a cause and its effect.
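The definitions of resolution and precision above are worth keeping apart from the choice of unit: a value printed in microseconds is only meaningful if the clock behind it can resolve microseconds. As a sketch, Python's `time.get_clock_info()` reports the resolution each of its clocks claims; the numbers you see depend on your operating system and hardware.

```python
import time

# Report the resolution each standard Python clock claims on this machine.
for name in ("time", "monotonic", "perf_counter"):
    info = time.get_clock_info(name)
    resolution_us = info.resolution * 1_000_000  # seconds -> microseconds
    print(f"{name:>12}: resolution {resolution_us:.3f} us ({info.implementation})")
```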

Usage in Technology

In the field of technology, timing and performance are crucial. The duration or interval of a task, the latency or delay in communication, and the difference in speed can have a significant impact on the overall performance of a system.

Microseconds and milliseconds are common units for quantifying time in technology. A microsecond is one millionth of a second and a millisecond is one thousandth, so the two differ by a factor of 1,000. Knowing when to use each is essential for optimizing system performance.

When measuring the speed or latency of a fast system, microseconds are often the preferred unit. Their finer resolution enables precise benchmarking and analysis, helping you identify and optimize the bottlenecks that slow down response times and overall system performance.

On the other hand, milliseconds are often used for tasks that do not require as fine a time resolution, such as user interactions, file operations, or network communication. Although milliseconds offer a coarser level of measurement, they are sufficient for most of these applications, and their larger size makes them better suited to capturing longer durations or delays.

Overall, the choice between microseconds and milliseconds depends on the specific requirements of the task at hand. If precise timing and faster response times are crucial, microseconds should be used. If a larger time scale or general measurement is sufficient, milliseconds would be the preferred unit. Having a clear understanding of the differences and appropriate use cases for each unit of time measurement is essential in optimizing technology systems.

When to Use Microsecond vs Millisecond

The choice between microseconds and milliseconds as a time unit depends on the specific requirements of your application and the level of precision it needs. Both are units of time, but they differ by a factor of 1,000, which determines the resolution each can express.

A microsecond, as the prefix suggests, is a much smaller unit of time than a millisecond: a millionth of a second versus a thousandth. Microsecond timing therefore offers much finer resolution, making it suitable for scenarios that require high-speed, low-latency performance. For example, in high-frequency trading systems, where timing precision is crucial, microsecond timers allow fast market fluctuations to be tracked more accurately.

On the other hand, millisecond timing is more appropriate for applications that don't need the same precision or as fine a measurement interval. Milliseconds provide a coarser level of measurement that suits scenarios such as user interface interactions, animations, or batch processing jobs. These applications can often tolerate a slightly longer delay or slower response time, and milliseconds give a good balance between precision and performance.
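To see why milliseconds are a natural fit for animations, the sketch below computes the time available to draw one frame, which is simply 1,000 ms divided by the frame rate. The frame rates are illustrative choices, and `frame_budget_ms` is a hypothetical helper.

```python
def frame_budget_ms(frames_per_second: float) -> float:
    """Time available to render one frame, in milliseconds."""
    return 1_000 / frames_per_second


for fps in (30, 60, 120):
    budget_ms = frame_budget_ms(fps)
    print(f"{fps} fps -> {budget_ms:.2f} ms per frame ({budget_ms * 1_000:.0f} us)")
```

At 60 frames per second the budget is about 16.67 ms, or roughly 16,667 microseconds: a span comfortably expressed in milliseconds.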

When comparing microsecond and millisecond timers, it's important to consider the specific use case and the requirements of the application. Microsecond timers offer finer resolution, but they can cost more: the same time span needs a wider counter or more storage, and high-resolution clocks may be more expensive to read on some platforms. Milliseconds, on the other hand, are more widely used and provide adequate timing for many applications at a lower cost in resources.
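One concrete example of the resource cost is counter width. Assuming an unsigned 32-bit tick counter, as found on many embedded platforms, the sketch below computes how long the counter runs before it overflows and wraps to zero. `wrap_seconds` is an illustrative helper, not a library function.

```python
SECONDS_PER_MINUTE = 60
SECONDS_PER_DAY = 86_400


def wrap_seconds(counter_bits: int, ticks_per_second: int) -> float:
    """Seconds until an unsigned counter of the given width overflows and wraps to zero."""
    return 2 ** counter_bits / ticks_per_second


us_wrap = wrap_seconds(32, 1_000_000)  # 32-bit counter ticking once per microsecond
ms_wrap = wrap_seconds(32, 1_000)      # 32-bit counter ticking once per millisecond

print(f"microsecond counter wraps after {us_wrap / SECONDS_PER_MINUTE:.1f} minutes")  # 71.6
print(f"millisecond counter wraps after {ms_wrap / SECONDS_PER_DAY:.1f} days")        # 49.7
```

The same 32 bits last about 71.6 minutes at microsecond resolution but about 49.7 days at millisecond resolution, so long-running microsecond timing typically needs a 64-bit counter or explicit wraparound handling.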

In conclusion, the choice between microsecond and millisecond timing depends on the specific needs of your application. If you require high-speed and high-precision measurement, microsecond timing is recommended. However, if a slightly lower level of precision is acceptable and you want to optimize performance and resource usage, millisecond timing is a suitable option. Consider the nature of your application and the desired time scale, and choose the appropriate timing unit accordingly.

Factors to Consider

When deciding whether to use microsecond or millisecond timing, there are several factors to consider:

- Delay and Interval: Microsecond timing is often used when precise timing is required, such as in scientific experiments or real-time systems where short intervals between events need to be measured accurately. Millisecond timing, on the other hand, is more suitable for applications where the delay between events is longer and less critical.

- Resolution and Accuracy: Microsecond timing offers finer resolution than millisecond timing, provided your clock can actually deliver it. If you need to measure time intervals with great precision, microsecond timing is the better choice.

- Performance and Speed: Measuring in microseconds does not make a system faster by itself, but it exposes small performance differences that millisecond measurements would hide. If your application requires quick and precise timing measurements, microsecond timing can be beneficial.

- Latency: Microsecond-capable timers can help minimize latency in applications that require real-time responsiveness. A timer that only fires on whole-millisecond boundaries can add up to a millisecond of extra wait to each event, which may be noticeable in time-sensitive scenarios.

- Benchmark and Comparison: If you are comparing the performance of different systems or conducting benchmark tests, microsecond timing can provide more detailed and accurate results compared to millisecond timing.

- Duration and Time Scale: Microsecond timing is ideal for measuring very short durations, while millisecond timing is more suited for longer time intervals. Consider the scale of time you need to measure when deciding between microsecond and millisecond timing.

- Unit of Measurement: Microseconds and milliseconds differ by a factor of 1,000, so confusing one for the other produces errors of that size. State the unit explicitly in variable names, interfaces, and logs.

By considering these factors, you can choose between microsecond and millisecond timing based on the specific requirements and constraints of your application.
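The unit-of-measurement factor above is easy to get wrong when durations travel as bare numbers. One way to guard against it in Python is to carry the unit with the value using `datetime.timedelta`; in this sketch, `timeout_ms` is a hypothetical setting documented as milliseconds.

```python
from datetime import timedelta

timeout_ms = 250  # hypothetical setting, documented as milliseconds

# Bug: treating the millisecond value as microseconds gives a duration 1,000 times too short.
wrong = timedelta(microseconds=timeout_ms)
# Correct: name the unit the number was actually given in.
right = timedelta(milliseconds=timeout_ms)

print(wrong)          # 0:00:00.000250
print(right)          # 0:00:00.250000
print(right / wrong)  # 1000.0
```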

Real-Life Examples

In real-life scenarios, the difference between microseconds and milliseconds becomes crucial when dealing with time-sensitive operations. Here are a few examples to illustrate the importance of this distinction:

- High-Frequency Trading: In the stock market, microseconds can make a significant difference in terms of trading competitiveness. Traders use sophisticated algorithms to execute trades in microseconds, aiming to take advantage of even the slightest price fluctuations.

- Telecommunication Networks: In telecommunication networks, latency that accumulates to tens or hundreds of milliseconds causes noticeable delays in voice and video communication, affecting the user experience. Microsecond-level timing is essential for high-speed transmission equipment and for diagnosing and reducing network latency.

- Computer Gaming: In fast-paced online gaming, millisecond-based timing is crucial for maintaining smooth gameplay. A delay of just a few milliseconds can result in missed actions or slower response times, impacting the overall gaming experience.

- Scientific Research: In fields like physics and astronomy, measurements taken at the microsecond level are necessary for capturing rapid events or analyzing high-speed phenomena. This level of precision enables researchers to make accurate observations and draw meaningful conclusions.

- Performance Benchmarking: When benchmarking computer systems or software performance, measuring operations in microseconds versus milliseconds can reveal significant differences in speed and efficiency. Microsecond-level measurements provide a more granular perspective on performance at a smaller scale.

Overall, understanding the distinction between microseconds and milliseconds is crucial for various industries and applications that require precise timing and fast operations. Whether it’s for achieving high-performance levels, reducing latency, ensuring accuracy in measurements, or delivering seamless user experiences, choosing the appropriate time scale, from microseconds to milliseconds, can make a significant difference.

FAQ about topic “Microsecond vs Millisecond: Understanding the Difference and When to Use Each”

What is the difference between a microsecond and a millisecond?

A microsecond is one millionth of a second, while a millisecond is one thousandth of a second. A microsecond is therefore 1,000 times smaller than a millisecond.

When should I use a microsecond instead of a millisecond?

You should use a microsecond when you need to measure or control very small time intervals. This can be useful in applications such as high-frequency trading, where even small differences in time can make a significant impact. In other words, if you require very precise timing, microseconds would be the appropriate choice.

When should I use a millisecond instead of a microsecond?

You should use a millisecond when you need to measure or control larger time intervals. This can be useful in applications such as animation, where milliseconds are commonly used to control the timing of frames. In general, if the intervals you measure or control are a millisecond or longer, milliseconds are more practical.

Are there any practical examples where the difference between microsecond and millisecond is crucial?

Yes, there are several examples where the difference between microsecond and millisecond is crucial. One such example is in the field of scientific research, where experiments often require precise timing in order to accurately measure phenomena. Another example is in the field of telecommunications, where the speed of data transmission can be affected by even small differences in timing.

Can you provide a more detailed explanation of how microsecond and millisecond timing is used in high-frequency trading?

In high-frequency trading, microseconds are used to measure the time it takes for data to travel through a network, execute a trade, and receive a confirmation. Traders use sophisticated algorithms to analyze market conditions and execute trades within a fraction of a second. By working at microsecond resolution, traders can gain a competitive advantage by reacting to market changes faster than their competitors. Millisecond resolution, on the other hand, may be too coarse for high-frequency trading, where even a delay of a millisecond can result in missed opportunities.